I am a color & luma ISP research engineer, enthusiastic about real-time, low-PPA computational photography algorithms and their product implementation. I currently work for DJI/Hasselblad, and previously worked as an engineer at Huawei, Honor and MicroBT. I work on a range of (still fairly narrow) ISP problems, for example auto white balance (AWB), illumination and reflectance spectra estimation, chromatic adaptation, BLC, LSC, CCM, AE and so on. I received my PhD from Tampere University in December 2020, where I was supervised by Prof. Joni-Kristian Kämäräinen and Prof. Jiri Matas.

Yidi Shao, Yanlin Qian (corresponding author) ICASSP, 2026. For edge detection in noisy settings, we propose a simple method called PPCE, which modifies the classic Canny edge detector with a Pull-Push non-local means (PP-NLM) denoising framework.

Ping Chen, Zezhou Chen, Xingpeng Zhang, Yanlin Qian, Huan Hu, Xiang Liu, Zipeng Wang, Xin Wang, Zhaoxiang Liu, Kai Wang, Shiguo Lian CVPR, 2026. Current 2D-to-3D conversion methods achieve geometric accuracy but are artistically deficient, failing to replicate the immersive and emotionally resonant experience of professional 3D cinema. This is because "geometric reconstruction" paradigms mistake deliberate artistic intent (such as strategic zero-plane shifts for "pop-out" effects and local depth sculpting) for data "noise" or ambiguity. This paper argues for a new paradigm, **Artistic Disparity Synthesis**, which shifts the goal from physically accurate disparity estimation to artistically coherent disparity synthesis. We propose Art3D, a preliminary framework exploring this paradigm.

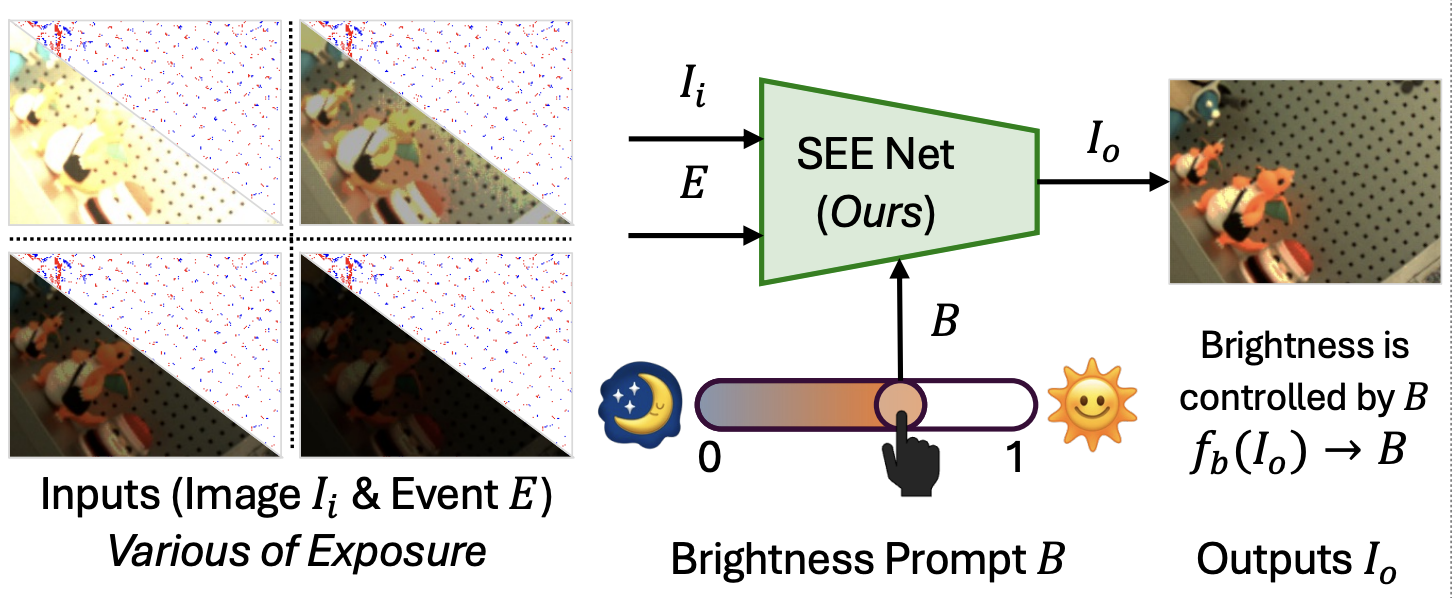

Yunfan Lu, ..., Yanlin Qian, ... IJCV, 2025. We propose a novel research question: how can events be used to enhance and adaptively adjust the brightness of images captured under a broad range of lighting conditions?

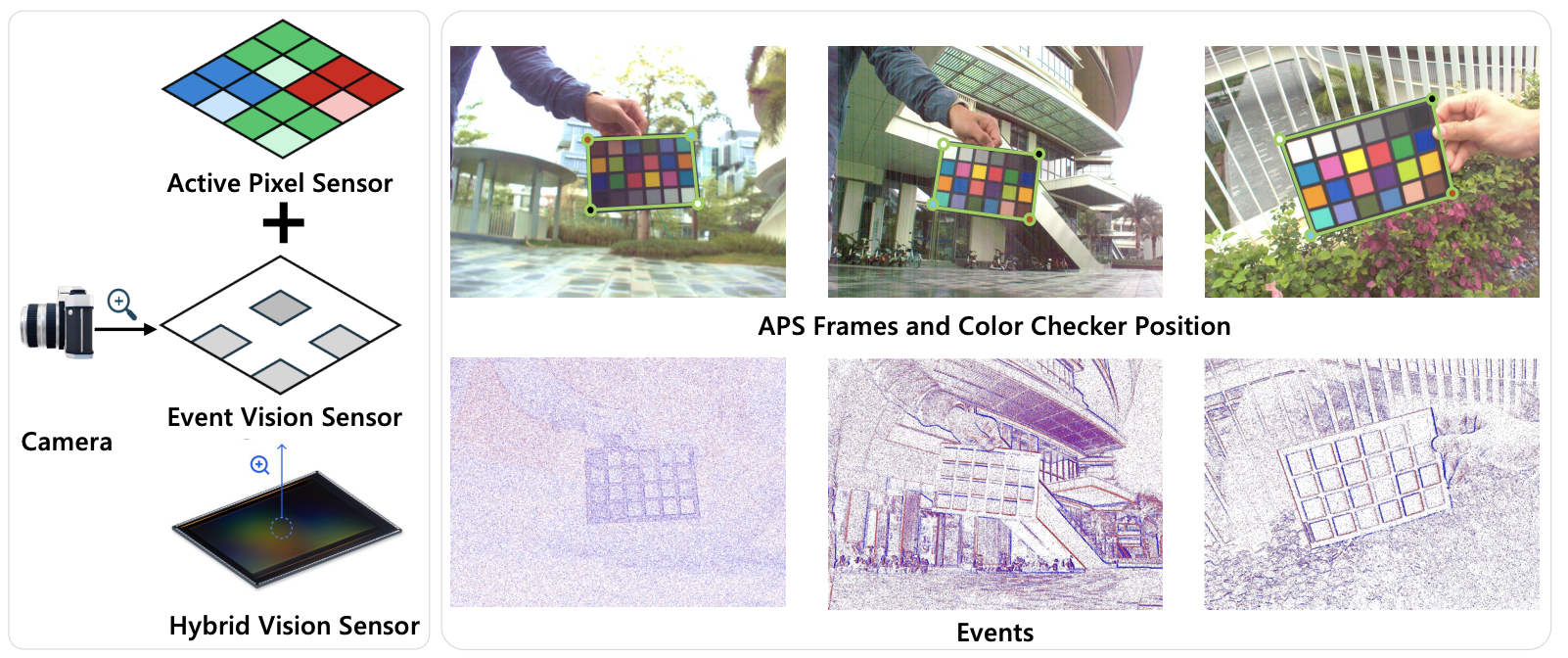

Yunfan Lu, Yanlin Qian, Ziyang Rao, Junren Xiao, Liming Chen, Hui Xiong∗ ICLR, 2025. First, we present a new event-RAW paired dataset, collected with a novel, still-confidential sensor that records pixel-level aligned events and RAW images. The dataset includes 3373 RAW images at 2248×3264 resolution and their corresponding events, spanning 24 scenes with 3 exposure modes and 3 lenses. Second, we propose a conventional ISP pipeline to generate good RGB frames as references.

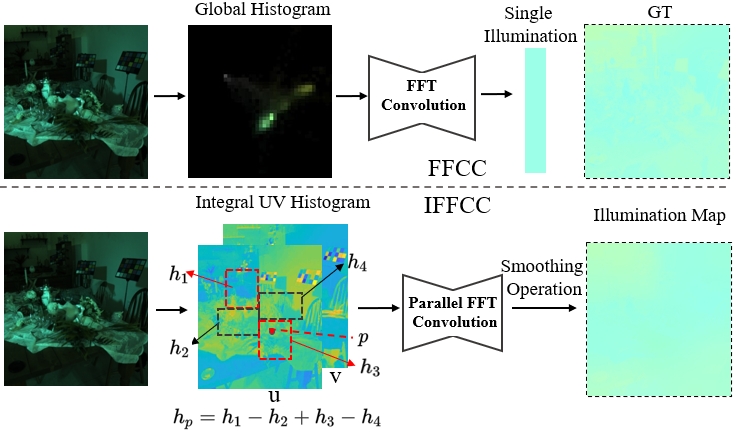

Wenjun Wei, Yanlin Qian (equal first author), Huaian Chen, Junkang Dai, Yi Jin CVPR, 2025. We propose Integral Fast Fourier Color Constancy (IFFCC), an extension of FFCC tailored for multi-illuminant scenes.

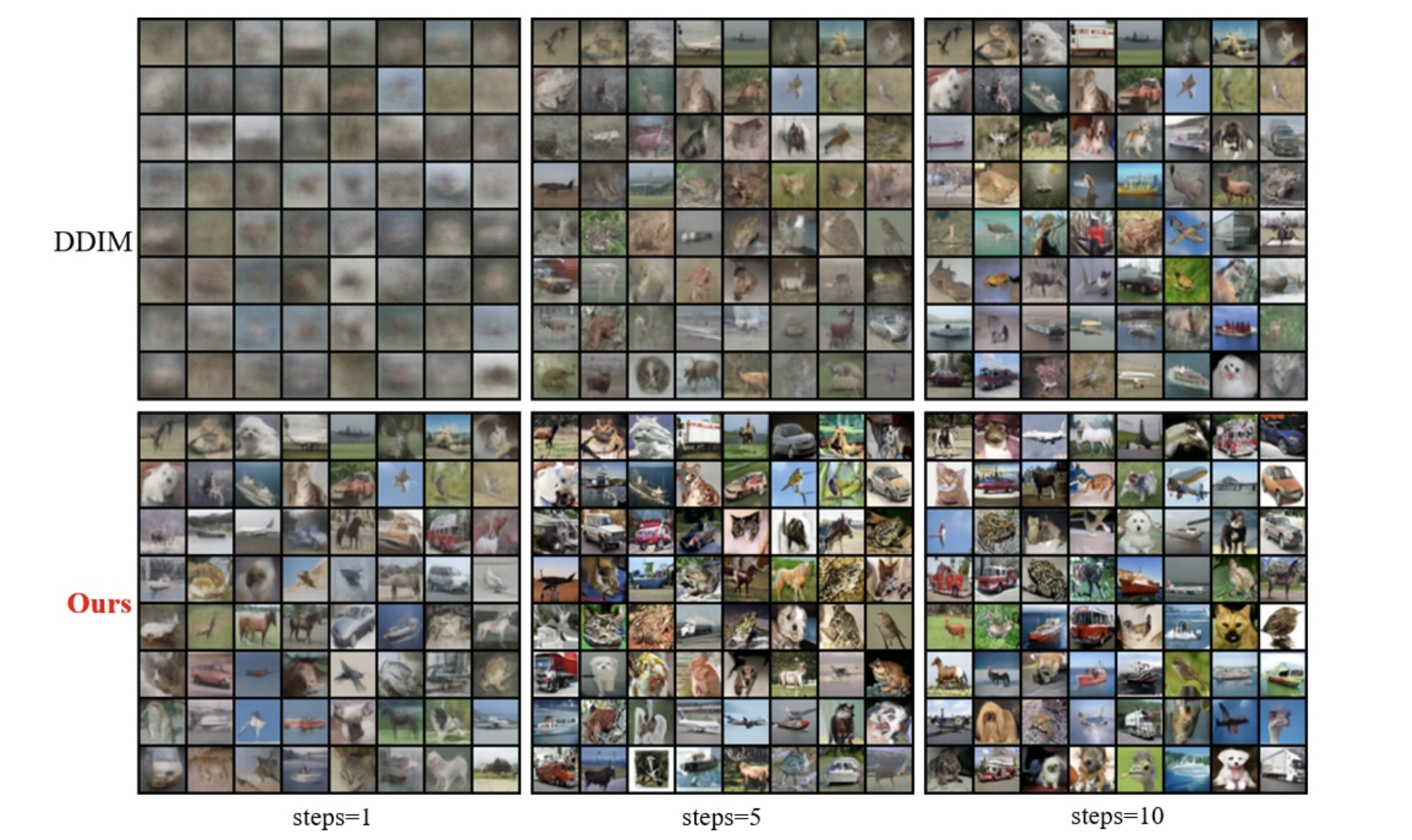

Ping Chen, ..., Yanlin Qian, ... CVPR, 2025. We propose a novel denoising diffusion model based on shortest-path modeling that optimizes residual propagation to improve both denoising efficiency and quality. Drawing on Denoising Diffusion Implicit Models (DDIM) and insights from graph theory, our model, termed the Shortest Path Diffusion Model (ShortDF), treats the denoising process as a shortest-path problem aimed at minimizing reconstruction error. By optimizing the initial residuals, we improve the efficiency of the reverse diffusion process and the quality of the generated samples. Extensive experiments on multiple standard benchmarks demonstrate that ShortDF significantly reduces diffusion time (or steps) while enhancing the visual fidelity of generated samples compared to prior art. We believe this work paves the way for interactive diffusion-based applications and establishes a foundation for rapid data generation.

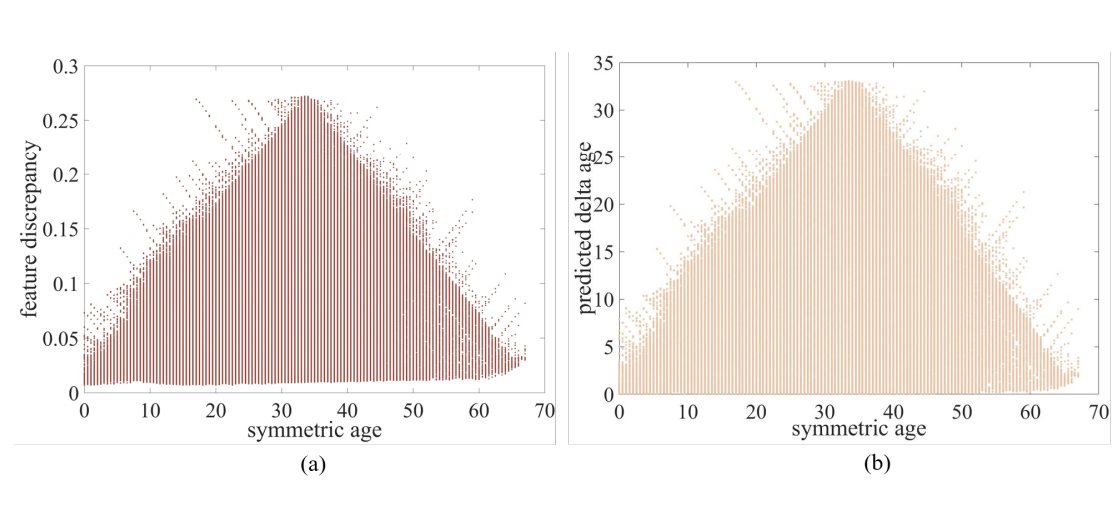

Ping Chen, Xingpeng Zhang, Chengtao Zhou, Dichao Fan, Peng Tu, Le Zhang, Yanlin Qian (corresponding author) CVPR, 2024. Building on a triangular distribution, we learn an injective function that linearly maps feature differences to label differences. We show its usefulness on three tasks: facial age recognition, illumination chromaticity estimation, and aesthetics assessment.

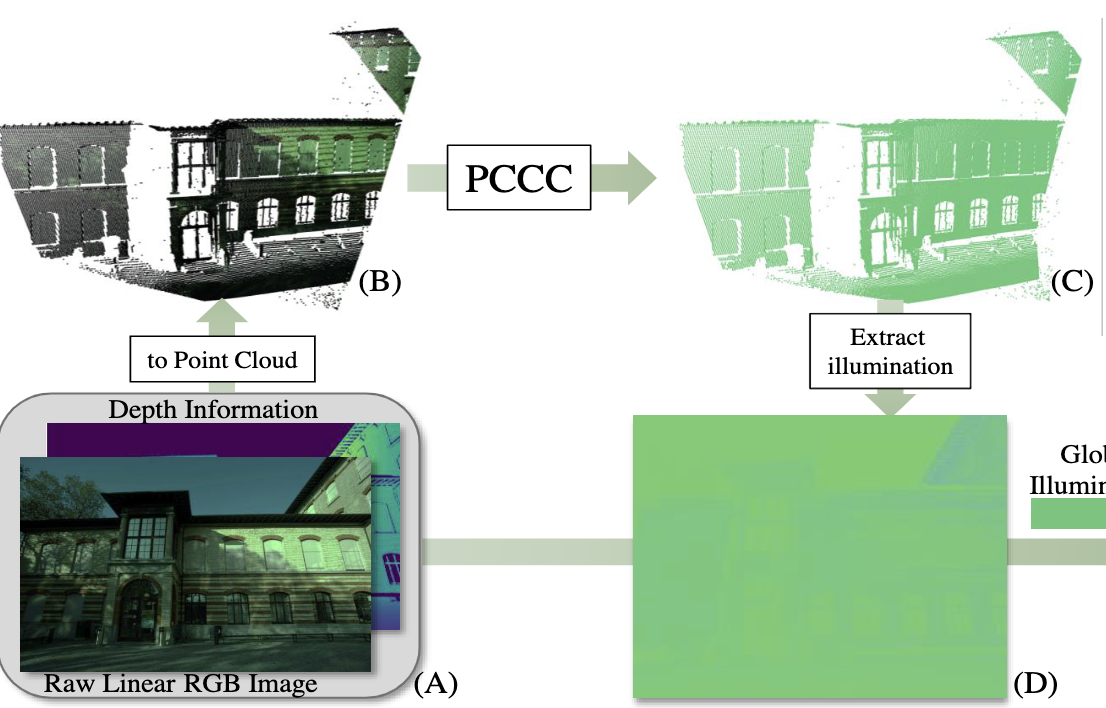

Xiaoyan Xing, Yanlin Qian (equal first author), Sibo Feng, Yuhan Dong, Jiri Matas CVPR, 2022. The first work to tackle the AWB problem in the world of 3D point clouds, captured with an RGB sensor and a ToF sensor.

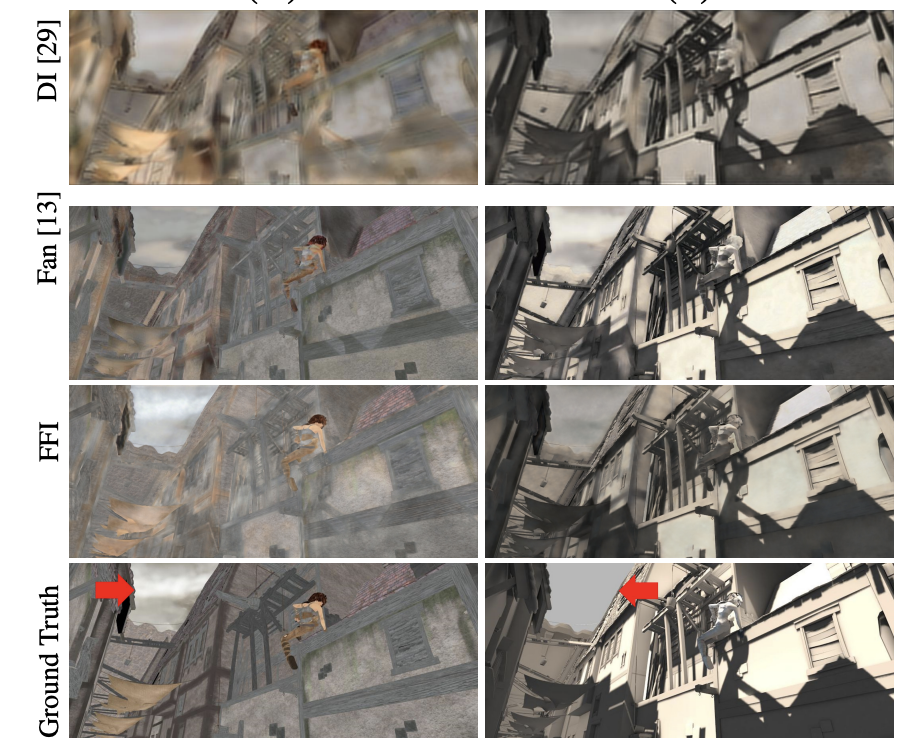

Yanlin Qian, Miaojing Shi, Joni-Kristian Kämäräinen, Jiri Matas WACV, 2020. To address the problem of decomposing an image into albedo and shading, we propose the Fast Fourier Intrinsic Network (FFI-Net), which operates in the spectral domain by splitting the input into several spectral bands.
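
The internals of FFI-Net are not described here, so the following is only a generic sketch of what "splitting the input into several spectral bands" can look like: it partitions the 2-D Fourier spectrum of a grayscale image into radial frequency bands and shows that the bands add back up to the input. The function names and the band edges (`edges`) are hypothetical choices for illustration, and a naive pure-Python DFT is used only to keep the block self-contained; real code would use an FFT such as `numpy.fft.fft2`.

```python
import cmath
import math


def dft2(x, inverse=False):
    """Naive O(N^4) 2-D DFT; only suitable for tiny illustrative arrays."""
    h, w = len(x), len(x[0])
    sign = 1.0 if inverse else -1.0
    out = [[0j] * w for _ in range(h)]
    for u in range(h):
        for v in range(w):
            acc = 0j
            for y in range(h):
                for xx in range(w):
                    phase = sign * 2.0 * math.pi * (u * y / h + v * xx / w)
                    acc += x[y][xx] * cmath.exp(1j * phase)
            # The inverse transform carries the 1/(h*w) normalisation.
            out[u][v] = acc / (h * w) if inverse else acc
    return out


def signed_frequency(u, n):
    """Map DFT index u in [0, n) to a signed frequency in cycles per sample."""
    return (u if u <= n // 2 else u - n) / n


def split_into_bands(img, edges=(0.1, 0.25)):
    """Hypothetical helper: split a real 2-D image into len(edges) + 1
    radial frequency bands. The masks partition the frequency plane,
    so summing all bands reconstructs the input."""
    h, w = len(img), len(img[0])
    spec = dft2(img)
    bounds = [0.0, *edges, math.inf]
    bands = []
    for lo, hi in zip(bounds[:-1], bounds[1:]):
        masked = [
            [
                spec[u][v]
                if lo <= math.hypot(signed_frequency(u, h), signed_frequency(v, w)) < hi
                else 0j
                for v in range(w)
            ]
            for u in range(h)
        ]
        # Each mask is symmetric under (u, v) -> (-u, -v), so the inverse of
        # a real image's masked spectrum is real up to rounding error.
        bands.append([[z.real for z in row] for row in dft2(masked, inverse=True)])
    return bands
```

A constant image ends up entirely in the lowest band (only its DC coefficient is non-zero), while textured regions spread their energy into the higher bands.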

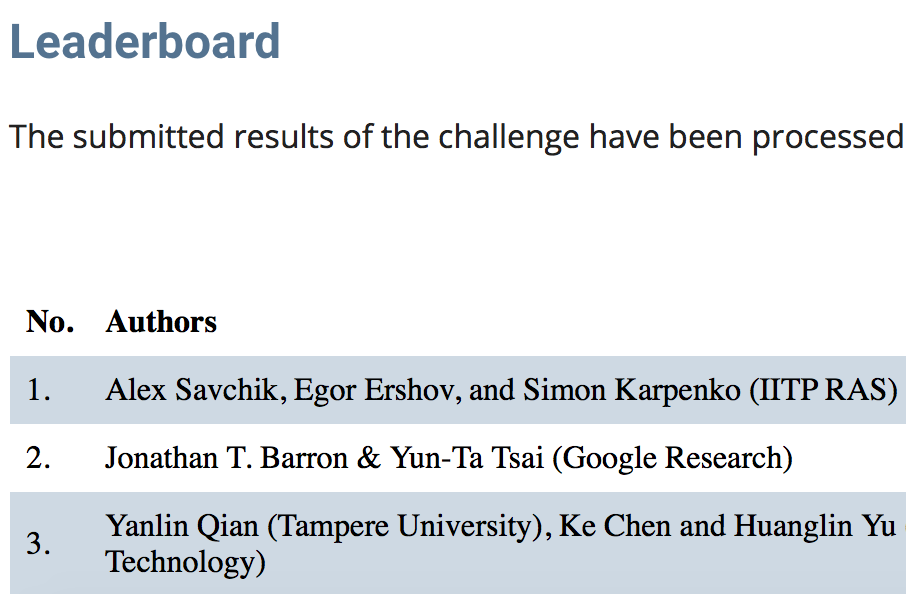

Yanlin Qian, Sibo Feng, Kang Qian, Miaofeng Wang ICMV Illumination Estimation Challenge, 2020. We propose a neural-network-based solution for three different tracks of the 2nd International Illumination Estimation Challenge. SDE-AWB obtains 1st place in both the indoor and two-illuminant tracks and 2nd place in the general track.

Yanlin Qian, Jani Käpylä, Joni-Kristian Kämäräinen, Samu Koskinen, Jiri Matas ECCV Workshop, 2020. Revisiting temporal color constancy: we propose a 600-video temporal color constancy dataset and a smaller, better and faster network called TCC-Net.

Huanglin Yu, Ke Chen, Kaiqi Wang, Yanlin Qian, Zhaoxiang Zhang, Kui Jia AAAI, 2020. We cascade SqueezeNet-based bricks to obtain state-of-the-art results on the Gehler-Shi and NUS 8-camera datasets.

Yanlin Qian, Joni Kämäräinen, Jarno Nikkanen, Jiri Matas CVPR, 2019. Gray (achromatic) pixels can be used for illumination estimation. This paper shows how to pick them out **accurately**.
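
As a rough illustration of the general gray-pixel idea (a simplified sketch, not the algorithm from the CVPR 2019 paper), assume a Lambertian model in which a gray surface gives `I_c(x) = E_c * S(x)` for illuminant `E` and shading/albedo `S`. In log space `log E_c` is constant over the image, so a Laplacian of the log intensity cancels it, and a gray pixel shows the same local contrast in all three channels. The hypothetical function `gray_pixel_illuminant` scores each interior pixel by the relative spread of its per-channel log-contrast, keeps the grayest fraction (`top_fraction`, an assumed parameter), and averages those pixels into a unit-norm illuminant estimate.

```python
import math


def gray_pixel_illuminant(img, top_fraction=0.1, eps=1e-6, flat_thresh=1e-3):
    """Hypothetical, simplified gray-pixel illuminant estimate.

    img: H x W list of (r, g, b) tuples in linear (RAW-like) space.
    Returns a unit-norm (r, g, b) illuminant estimate.
    """
    h, w = len(img), len(img[0])
    scored = []  # (grayness index, pixel); a lower index means grayer
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            contrasts = []
            for c in range(3):
                # Laplacian of log intensity: the log E_c term cancels, so a
                # gray pixel has equal contrast in every channel.
                center = math.log(img[y][x][c] + eps)
                neighbours = sum(
                    math.log(img[yy][xx][c] + eps)
                    for yy, xx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1))
                )
                contrasts.append(abs(4.0 * center - neighbours))
            mean_c = sum(contrasts) / 3.0
            if mean_c < flat_thresh:
                continue  # flat patch: carries no evidence about grayness
            spread = math.sqrt(sum((k - mean_c) ** 2 for k in contrasts) / 3.0)
            scored.append((spread / mean_c, img[y][x]))
    if scored:
        scored.sort(key=lambda item: item[0])
        n = max(1, int(len(scored) * top_fraction))
        chosen = [pixel for _, pixel in scored[:n]]
    else:
        chosen = [pixel for row in img for pixel in row]  # gray-world fallback
    est = [sum(p[c] for p in chosen) for c in range(3)]
    norm = math.sqrt(sum(v * v for v in est))
    return tuple(v / norm for v in est)
```

Only channel ratios matter for white balance, which is why the estimate is normalized; diagonal (von Kries) correction gains would then be proportional to `1 / E_c`.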

Yanlin Qian, Said Pertuz, Joni Kämäräinen, Jarno Nikkanen, Jiri Matas VISAPP, 2019. Gray (achromatic) pixels can be used for illumination estimation. This paper shows how to pick them out using clustering.

Yanlin Qian, Song Yan, Joni Kämäräinen, Jiri Matas ICIP, 2019. Gray (achromatic) pixels can be used for illumination estimation. This paper shows how to pick them out with the help of flash photography.

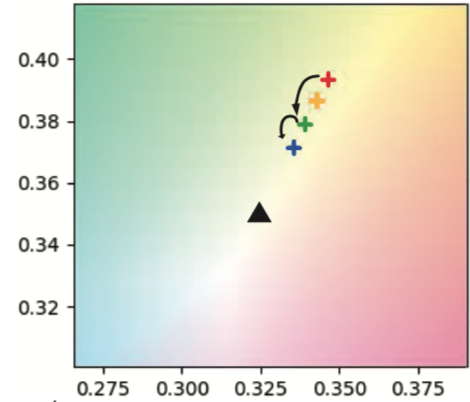

Yanlin Qian, Ke Chen, Huanglin Yu ISPA, International Workshop on Color Vision, 2019. We briefly introduce two submissions to the Illumination Estimation Challenge at the Int'l Workshop on Color Vision, affiliated with the 11th Int'l Symposium on Image and Signal Processing and Analysis. The Fourier-transform-based submission ranked 3rd, and the statistical gray-pixel-based one ranked 6th.

Yanlin Qian, Ke Chen, Joni Kämäräinen, Jarno Nikkanen, Jiri Matas ICCV, 2017. Temporal color constancy: estimating the illumination of the captured image from a deque of images.

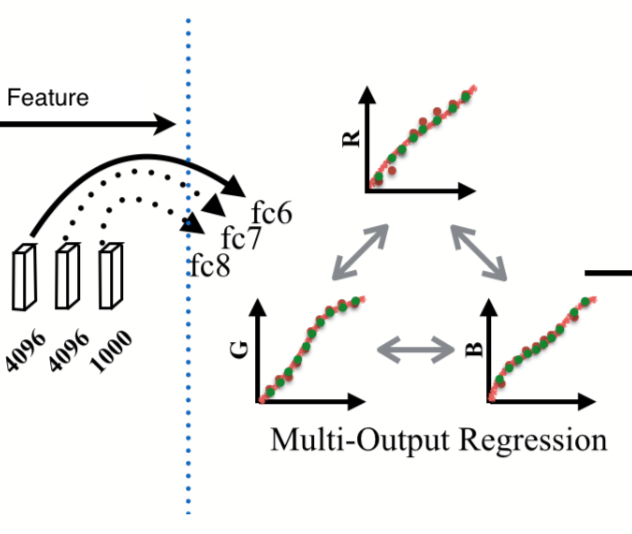

Yanlin Qian, Ke Chen, Joni-Kristian Kamarainen, Jarno Nikkanen, Jiri Matas ICPR, 2016. Illumination estimation based on VGG and AlexNet feature maps and multi-output regression. This is my first paper.
